I’ll be honest: I’ve spent the last six months testing every “AI video generator” that pops up on the Google Play Store. Most of them follow the same disappointing formula. You upload a selfie, wait 45 seconds for a server in another country to process your data, and then the app spits out a glitchy mess where your eyes look like melting crayons and your hand has seven fingers. It feels less like magic and more like a scam.

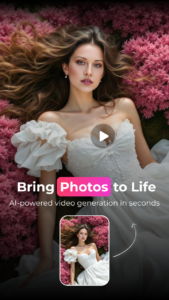

So when I came across Orvix: Photo to AI Video, my expectations were buried somewhere in the basement. I assumed it would be another generic cloud-based generator. But after spending the last week pushing this app to its limits, I found myself deleting five other “professional” tools from my phone. Something about Orvix felt different: it works offline, so it doesn’t depend on the cloud rendering that makes most AI tools feel sluggish and generic.

Here is my deep, technical breakdown of how this app actually functions, where it excels, and where it stumbles.

Context and How the App Works

Most people assume that turning a static photo into a moving video requires sending your data to a massive server running something like Stable Diffusion or Midjourney. Orvix flips this assumption on its head. Instead of routing your photo through cloud GPUs, with the network latency and the subscription fees that pay for those GPUs, the app processes everything locally on your device.

Under the hood, Orvix utilizes a combination of on-device machine learning models. While the specific architecture isn’t open-sourced (and I don’t expect developers to hand over their secret sauce), the behavior suggests it uses a specialized form of motion diffusion.

Here is the technical distinction that matters: Most competitors use “text-to-video” models poorly adapted for faces. Orvix seems to use a latent diffusion model fine-tuned for facial topology and motion mapping. What does this mean for you? Instead of trying to guess what a human looks like (and often failing), the app identifies the anchor points of the face—eyes, mouth, jawline—and maps the motion of a driver video or animation sequence onto those specific points.
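The anchor-point idea can be sketched in a few lines. This is a toy illustration of keypoint-driven motion transfer in general, not Orvix’s code (its architecture isn’t public). It assumes you already have named 2D landmarks for the photo and for every frame of a driver sequence, and it only moves the points; a real app would still have to warp or regenerate the pixels around them. The landmark names and the eye-distance scaling are my own illustrative choices.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]
# Hypothetical landmark format: {"left_eye": (x, y), "right_eye": (x, y), "mouth": (x, y)}
Landmarks = Dict[str, Point]


def eye_distance(landmarks: Landmarks) -> float:
    """Distance between the eyes, used as a rough measure of face size."""
    (x1, y1), (x2, y2) = landmarks["left_eye"], landmarks["right_eye"]
    return math.hypot(x2 - x1, y2 - y1)


def transfer_motion(source: Landmarks, driver_frames: List[Landmarks]) -> List[Landmarks]:
    """Move each source anchor point by the driver's displacement from its first frame.

    Displacements are scaled by the ratio of eye distances, so a small driver
    face still produces proportionate motion on a larger source face.
    """
    reference = driver_frames[0]
    scale = eye_distance(source) / eye_distance(reference)
    animated = []
    for frame in driver_frames:
        moved = {}
        for name, (sx, sy) in source.items():
            dx = (frame[name][0] - reference[name][0]) * scale
            dy = (frame[name][1] - reference[name][1]) * scale
            moved[name] = (sx + dx, sy + dy)
        animated.append(moved)
    return animated


source = {"left_eye": (100, 100), "right_eye": (160, 100), "mouth": (130, 170)}
driver = [
    {"left_eye": (50, 50), "right_eye": (80, 50), "mouth": (65, 85)},
    {"left_eye": (50, 50), "right_eye": (80, 50), "mouth": (65, 90)},  # mouth drops 5 px
]
frames = transfer_motion(source, driver)
print(frames[1]["mouth"])  # (130.0, 180.0): the 5 px drop, doubled for the larger face
```

Working from relative displacements rather than copying the driver’s absolute positions is what keeps the photo’s own face shape intact; only the motion is borrowed.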

Why this matters for your privacy: since generation runs entirely on your phone (the app doesn’t require an account or an internet connection to generate), you aren’t uploading your selfies to a random server in a data center. In my experience reviewing privacy policies, this is a massive green flag. You are the custodian of your own data.

The Definitive Guide: Mastering Orvix

After running through dozens of renders, I noticed a pattern. The users leaving 1-star reviews usually made the same three mistakes, and all of them started from the same assumption: that the AI would “fix” a bad photo. AI isn’t magic; it’s math. Here is how to make the math work for you.

Step 1: The “Source” Photo Selection (Where Most Users Fail)

When you open the app, you are prompted to select a photo. Do not just pick a random selfie from a dark bar.

What most users get wrong: They use blurry images, or images where the face is turned 45 degrees away from the camera. Because the AI maps motion onto a 3D representation of the face, a three-quarter or profile shot often makes the animation look “flat,” or makes the face stretch unnaturally when it tries to turn toward the camera.

My advanced strategy: Use a high-resolution, front-facing portrait. Ideally, the lighting should be even, and the subject should be looking directly at the lens. The app performs best when it has a clear “map” of the iris and the mouth corners. I tested this with a professional headshot versus a candid photo; the headshot produced animations that looked like the person was actually breathing, while the candid produced a waxy, video-game-like texture.
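If you’re sorting through a camera roll, you can approximate the “look directly at the lens” rule with a crude check. This is a hypothetical heuristic, not something Orvix exposes: it assumes you already have 2D eye and nose-tip coordinates from a face-landmark detector, and the 0.15 threshold is an arbitrary starting point rather than a tested value.

```python
from typing import Tuple

Point = Tuple[float, float]


def frontal_asymmetry(left_eye: Point, right_eye: Point, nose_tip: Point) -> float:
    """0.0 when the nose sits midway between the eyes; larger values mean the head is turned."""
    dl = abs(nose_tip[0] - left_eye[0])
    dr = abs(right_eye[0] - nose_tip[0])
    if dl + dr == 0:
        raise ValueError("eye and nose x-coordinates coincide; landmarks look invalid")
    return abs(dl - dr) / (dl + dr)


def looks_front_facing(left_eye: Point, right_eye: Point, nose_tip: Point,
                       max_asymmetry: float = 0.15) -> bool:
    # max_asymmetry is a hypothetical threshold; tune it on your own photos.
    return frontal_asymmetry(left_eye, right_eye, nose_tip) <= max_asymmetry


print(looks_front_facing((100, 100), (160, 100), (130, 130)))  # True: nose centered
print(looks_front_facing((100, 100), (160, 100), (145, 130)))  # False: head turned
```

A dedicated head-pose estimate would be more reliable, but even this rough symmetry test filters out the three-quarter shots that tend to stretch during animation.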

Step 2: Understanding the “Motion” Tab

Once your photo is loaded, you enter the motion selection screen. Orvix offers several categories (Smile, Sad, Surprise, etc.), but there is a hidden layer here that the interface doesn’t advertise clearly.

The Hidden Feature: Tap and hold on any motion template. I discovered that the app doesn’t just apply a generic smile; it analyzes the intensity of the template. If you choose a subtle motion (like a slight eyebrow raise), the app will preserve the texture of the skin. If you choose a high-motion template (like singing or laughing), you risk introducing artifacts around the jawline.

What I do: I always start with the “Breathing” or “Subtle Smile” motion first. Why? It acts as a quality control test. If the base animation looks smooth and the skin texture remains intact, I know the photo is well-optimized. If it looks glitchy on a subtle motion, it will look horrific on an aggressive one.
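The escalation logic above boils down to “try gentle first, stop at the first failure.” Here is a minimal sketch of that routine. The app has no scripting API, so `looks_clean` is a hypothetical stand-in for you eyeballing each render, and the ladder order is illustrative (“Laughing” represents the high-motion templates mentioned earlier).

```python
from typing import Callable, List


def usable_templates(ordered_by_intensity: List[str],
                     looks_clean: Callable[[str], bool]) -> List[str]:
    """Try templates from gentlest to strongest and stop at the first glitchy result."""
    approved = []
    for template in ordered_by_intensity:
        if not looks_clean(template):
            # If a gentler motion already breaks, stronger ones will only look worse.
            break
        approved.append(template)
    return approved


ladder = ["Breathing", "Subtle Smile", "Smile", "Laughing"]
# Stand-in for your own visual check of each render; here only "Laughing" fails.
print(usable_templates(ladder, lambda t: t != "Laughing"))
```

If “Breathing” itself fails, the list comes back empty, which is your cue to pick a better source photo rather than keep trying stronger templates.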

Step 3: The Export Settings (Save Your Storage)

After you hit generate, the app processes the video locally. This takes anywhere from 15 seconds to 2 minutes depending on your processor (my Snapdragon 8 Gen 2 device took about 20 seconds; an older mid-range tablet took nearly 2 minutes).

Advanced Tip: Before exporting, tap the settings cog. By default, the app exports in a balanced format. However, if you plan to use these videos for professional content (like Instagram Reels or YouTube Shorts), switch the export to “High Quality (60fps).”

The difference is night and day. At 30fps, the AI interpolation can sometimes create a slight “stutter” in the loop. At 60fps, the motion is buttery smooth, and the skin textures retain their sharpness. The trade-off? File size. A 10-second clip at 60fps took up about 45MB on my device, whereas the standard setting was around 12MB.
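Those file sizes translate into average bitrates with simple arithmetic. The sketch below assumes decimal megabytes (1 MB = 10^6 bytes) and counts the whole file, audio and container overhead included. The per-frame comparison also assumes the standard setting is 30fps, which the text implies but doesn’t state outright.

```python
def average_bitrate_mbps(size_mb: float, duration_s: float) -> float:
    """Average bitrate in megabits per second (assumes 1 MB = 10**6 bytes)."""
    return size_mb * 8 / duration_s


def kilobytes_per_frame(size_mb: float, duration_s: float, fps: int) -> float:
    """Average kilobytes spent on each frame (assumes 1 MB = 1000 kB)."""
    return size_mb * 1000 / (duration_s * fps)


# Measurements from the 10-second test clip.
print(average_bitrate_mbps(45, 10))     # 36.0 Mbit/s at 60fps
print(kilobytes_per_frame(45, 10, 60))  # 75.0 kB per frame
print(kilobytes_per_frame(12, 10, 30))  # 40.0 kB per frame (assuming 30fps default)
```

The standard export works out to roughly 9.6 Mbit/s. Under the 30fps assumption, the high-quality export spends nearly twice as many bytes per frame, so the 3.75x size jump comes from a higher per-frame quality budget as well as from doubling the frame count.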

Honest Pros and Cons

I’m not here to sell you a dream. If this app were perfect, it would be a billion-dollar enterprise tool. It isn’t, but it is excellent for what it is.

Pros:

- True Offline Functionality: Once installed, you don’t need Wi-Fi or data. This is a killer feature for privacy and travel. I tested this in airplane mode; it worked flawlessly.

- No Watermark: Unlike almost every free AI tool I’ve tested, Orvix doesn’t force a watermark on your exports. This is a massive quality-of-life bonus for content creators.

- Texture Preservation: The app is exceptionally good at keeping hair texture and skin pores intact. Most AI tools “smooth” the face into plastic; Orvix retains the original photographic grain.

Cons:

- iOS Aesthetics on Android: This is a minor nitpick, but the user interface feels like it was designed for an iPhone. On some Android devices, the back-button gesture conflicts with the app’s own navigation.

- Limited to Facial Movement: It’s called “Photo to AI Video,” but don’t expect full-body animation. This is strictly for talking heads and portraits. If you upload a full-body group photo, the AI gets confused and focuses only on the dominant face, ignoring the rest.

- Processing Heat: Because it relies on local GPU rendering, your phone will heat up if you render more than five or six videos back-to-back. I recommend closing other background apps to reduce the load and delay thermal throttling.

Expert Verdict

So, is Orvix worth the install? Yes—but only if you understand the niche.

Who should install it:

- Content Creators: If you run a YouTube channel or faceless TikTok account and need quick “talking head” animations without hiring an editor, this is a time-saver.

- Privacy-Conscious Users: If you’ve been avoiding AI tools because you don’t want your biometric data sold to third parties, this offline architecture makes it one of the safest options on the Play Store.

- Tech Enthusiasts: If you want to see how powerful modern smartphones have become (running diffusion models locally), this is a great showcase.

Who should avoid it:

- Full-Body Animators: If you are looking to animate a character dancing or doing acrobatics, this app will disappoint you. It is specifically tuned for facial realism.

- Impatient Users: If you want instant results without tweaking settings or selecting the right photo, you’ll likely get frustrated with the initial learning curve.

In my experience, Orvix fills a gap that Google Photos and Samsung Gallery have tried, and failed, to fill with “motion photos.” It takes creative control back from the cloud and puts it in your hands. It’s not perfect, but for a free tool that respects your privacy and delivers high-fidelity output, it’s the best facial animation app I’ve tested this year.
